Waldorf Graduates Pursue Meaningful Careers

Will My Child Succeed After Waldorf High School? The Research Says Yes

Choosing a high school is a critical decision, and parents often wonder: Will this education prepare my child for college, career, and life?

For families considering Waldorf high schools, this question is especially relevant. Given Waldorf's emphasis on experiential learning, seminar-style discussions, and an interdisciplinary curriculum, can a Waldorf education truly equip students for fields like medicine, law, technology, and business?

Decades of research say yes.

Waldorf Graduates Excel in Higher Education and Careers

A 60-year study (Survey of Waldorf Graduates, Phase II, Mitchell & Gerwin, 2007) found that:

- 94% of Waldorf graduates attend college.

- 42% major in science-related fields—more than double the national average.

- Many earn advanced degrees in medicine, law, engineering, business, and the arts.

- Alumni thrive in fields ranging from finance and research to entrepreneurship and sustainability.

Waldorf Alumni Spotlight: Science in Action

After earning a degree in Earth Systems Engineering from the University of Michigan, Gavin Chensue ('06) joined the NOAA Commissioned Officer Corps, leading critical climate studies worldwide.

"Steiner education gave me a taste of everything. When I needed direction in college, I remembered how much I loved studying weather and geology—and that led me to where I am today." - Gavin Chensue (RSSAA '06), Research Engineer at SRI International, Former NOAA Corps Officer

His story highlights how Waldorf graduates succeed in STEM, combining curiosity and real-world problem-solving to make a global impact.

Read the full studies:

- Survey of Waldorf Graduates, Phase II: https://www.waldorfeducation.org/research

- Into the World Study: https://www.waldorfeducation.org/graduate-outcomes

What Makes a Waldorf Education So Effective?

Contrary to the belief that test-driven education leads to success, research shows that employers and universities highly value graduates who:

✔ Think critically and independently

✔ Communicate clearly and persuasively

✔ Collaborate effectively

✔ Solve complex problems

✔ Adapt to a changing world

These are precisely the skills Waldorf high schools cultivate.

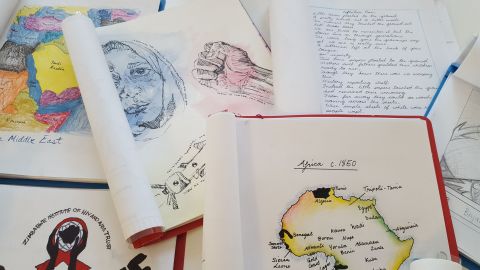

1. Depth Over Memorization: Lifelong Knowledge Retention

Rather than rote memorization, Waldorf fosters deep understanding:

- History is explored through primary sources and narratives.

- Science emphasizes hands-on experiments and independent research.

- Mathematics focuses on real-world application.

- Literature and philosophy encourage analytical discussions.

One employer noted:

“Waldorf students don’t just look for the right answer—they explore the why and how behind complex issues.”

2. Seminar-Style Learning Develops Exceptional Communicators

Waldorf emphasizes oral presentations, debates, and collaborative discussions, preparing graduates for leadership roles in law, finance, business, and medicine.

Dr. Ilan Safit, co-author of *Into the World*, states:

“We consistently hear that Waldorf graduates excel at teamwork and leadership. Their ability to articulate complex ideas is a major asset.”

A study from Da Vinci Waldorf School found that Waldorf students select careers based on values and passion rather than prestige or income.

Final Thoughts: The Research Speaks for Itself

Professors and employers highlight Waldorf graduates’ intellectual curiosity and ability to think independently.

With strong college attendance rates, success across multiple professions, and the skills needed to navigate a complex world, one thing is clear:

Waldorf graduates don’t just succeed—they thrive.

Education in the Age of A.I.

How would you design an education system that helps students flourish as artificial intelligence changes the nature of work? The rise of ChatGPT and other large language models has brought this question to the forefront of parents' and educators' minds. Waldorf education is uniquely focused on developing children’s creativity, cultural competency, imagination, and original thinking. We believe that teaching students to articulate their own diverse viewpoints, as well as their understanding of the material, sets them up for future success. In a future where AI-generated content relies on recombining existing work, our graduates' abilities to think critically, divergently, and creatively will serve them well.

ChatGPT Is Dumber Than You Think: Treat it like a toy, not a tool.

This article was originally written by Ian Bogost and published in The Atlantic

As a critic of technology, I must say that the enthusiasm for ChatGPT, a large-language model trained by OpenAI, is misplaced. Although it may be impressive from a technical standpoint, the idea of relying on a machine to have conversations and generate responses raises serious concerns.

First and foremost, ChatGPT lacks the ability to truly understand the complexity of human language and conversation. It is simply trained to generate words based on a given input, but it does not have the ability to truly comprehend the meaning behind those words. This means that any responses it generates are likely to be shallow and lacking in depth and insight.

Furthermore, the reliance on ChatGPT for conversation raises ethical concerns. If people begin to rely on a machine to have conversations for them, it could lead to a loss of genuine human connection. The ability to connect with others through conversation is a fundamental aspect of being human, and outsourcing that to a machine could have detrimental side effects on our society.

Hold up, though. I, Ian Bogost, did not actually write the previous three paragraphs. A friend sent them to me as screenshots from his session with ChatGPT, a program released last week by OpenAI that one interacts with by typing into a chat window. It is, indeed, a large language model (or LLM), a type of deep-learning software that can generate new text once trained on massive amounts of existing written material. My friend’s prompt was this: “Create a critique of enthusiasm for ChatGPT in the style of Ian Bogost.”

ChatGPT wrote more, but I spared you the rest because it was so boring. The AI wrote another paragraph about accountability (“If ChatGPT says or does something inappropriate, who is to blame?”), and then a concluding paragraph that restated the rest (it even began, “In conclusion, …”). In short, it wrote a basic, high-school-style five-paragraph essay.

That fact might comfort or frighten you, depending on your predilections. When OpenAI released ChatGPT to the public last week, the first and most common reaction I saw was fear that it would upend education. “You can no longer give take-home exams,” Kevin Bryan, a University of Toronto professor, posted on Twitter. “I think chat.openai.com may actually spell the end of writing assignments,” wrote Samuel Bagg, a University of South Carolina political scientist. That’s the fear.

But you may find comfort in knowing that the bot’s output, while fluent and persuasive as text, is consistently uninteresting as prose. It’s formulaic in structure, style, and content. John Warner, the author of the book Why They Can’t Write, has been railing against the five-paragraph essay for years and wrote a Twitter thread about how ChatGPT reflects this rules-based, standardized form of writing: “Students were essentially trained to produce imitations of writing,” he tweeted. The AI can generate credible writing, but only because writing, and our expectations for it, has become so unaspiring.

Even pretending to fool the reader by passing off an AI copy as one’s own, like I did above, has become a tired trope, an expected turn in a too-long Twitter thread about the future of generative AI rather than a startling revelation about its capacities. On the one hand, yes, ChatGPT is capable of producing prose that looks convincing. But on the other hand, what it means to be convincing depends on context. The kind of prose you might find engaging and even startling in the context of a generative encounter with an AI suddenly seems just terrible in the context of a professional essay published in a magazine such as The Atlantic. And, as Warner’s comments clarify, the writing you might find persuasive as a teacher (or marketing manager or lawyer or journalist or whatever else) might have been so by virtue of position rather than meaning: The essay was extant and competent; the report was in your inbox on time; the newspaper article communicated apparent facts that you were able to accept or reject.

Perhaps ChatGPT and the technologies that underlie it are less about persuasive writing and more about superb bull**. A bull**er plays with the truth for bad reasons—to get away with something. Initial response to ChatGPT assumes as much: that it is a tool to help people contrive student essays, or news writing, or whatever else. It’s an easy conclusion for those who assume that AI is meant to replace human creativity rather than amend it.

The internet, and the whole technology sector on which it floats, feels like a giant organ for bull**ery—for upscaling human access to speech and for amplifying lies. Online, people cheat and dupe and skirmish with one another. Deep-learning AI worsens all this by hiding the operation of software such as LLMs such that nobody, not even their creators, can explain what they do and why. OpenAI presents its work as context-free and experimental, with no specific use cases—it says it published ChatGPT just to “get users’ feedback and learn about its strengths and weaknesses.” It’s no wonder the first and most obvious assumption to make about ChatGPT is that it is a threat—to something, to everything.

But ChatGPT isn’t a step along the path to an artificial general intelligence that understands all human knowledge and texts; it’s merely an instrument for playing with all that knowledge and all those texts. Play just involves working with raw materials in order to see what they can do. You play a game, or an instrument, to avail yourself of familiar materials in an unexpected way. LLMs are surely not going to replace college or magazines or middle managers. But they do offer those and other domains a new instrument—that’s really the right word for it—with which to play with an unfathomable quantity of textual material.

I talked with ChatGPT about a wide range of topics, including poetry, behavioral psychology, and computer programming, in order to test this theory. Right now, ChatGPT is just a tech demo, a research experiment. Less clear is how it might be used, beyond the dire predictions about what sectors its technology might upend. In almost every case, the AI appeared to possess both knowledge and the means to express it. But when pressed—and the chat interface makes it easy to do so—the bot almost always had to admit that it was just making things up.

Sometimes it simply used templates. When I asked ChatGPT to generate a cover letter for a university job, it obliged in a competent but uninspired way. It also produced exactly the same letter for a job as a magazine editor as it did for a job as a cannabis innovator in the Web3 space (“I have a deep understanding of the web3 space and the unique challenges and opportunities it presents”). Fair enough: Most cover letters are terrible, after all, repeating the same trite lines and abstract gestures.

In other cases, the bot produced creative output that was surprisingly synthetic but riddled with flaws. When I asked it to generate an imagist poem in the vein of Ezra Pound or William Carlos Williams about hamburgers, it obliged with this short refrain:

Juicy patty, sizzling hot

Toasted bun, crisp lettuce and tomato

A symphony of flavors, a feast for the senses

Savory, satisfying, a true

American classic.

Not bad, I guess, especially for a computer. But also, the poem is not really an example of imagism, which strives to evoke a precise image by means of deliberate language (something like, “The dense honesty of / A hamburger.”) So I called ChatGPT on it. “This is not an imagist poem.” Its response is worth reproducing in full:

You are correct, the poem that I generated is still not an imagist poem. An imagist poem uses precise, vivid imagery to convey a specific idea or emotion, and focuses on a single image or moment. The poem that I generated uses a more narrative and descriptive style, and does not focus on a single, specific image. It describes the ingredients and flavors of a hamburger, but does not use precise and vivid imagery to convey a specific idea or emotion. I apologize if my previous responses did not meet your expectations, and I will do my best to assist you with any further questions or prompts that you may have.

I also urged the AI to generate a lai (a medieval narrative poem) in the style of Marie de France about the beloved, Texas-based fast-food chain Whataburger. It sure as heck looked a lot like a lai, beginning like this:

In the land of Whataburger, where burgers and fries are king,

A stranger came to visit, and with him brought a ring.

He wore a cloak of velvet, and a hat upon his head,

And he smiled with a twinkle in his eye as he approached the counter, where the burgers are served hot and fresh, and the fries are crispy and golden.

Except for that last line, which is a metrical mess. The AI wasn’t able (or willing) to evaluate its work (“I am a large language model trained by OpenAI and I don’t have the ability to evaluate the quality of literary works or make aesthetic judgments.”), but it was able to tell me about the typical structure of a lai—a short, narrative poem of the 12th century with a particular structure and form, usually written in octosyllabic couplets. The lyrical errors in the generated lai arise from its failure to adhere to that metrical form.

When I asked about the matter, it admitted again that, no, the lai it had written was not structured in octosyllabic couplets, claiming that it had produced “a more modern and playful take on the form of the lai.” I was starting to feel like I was negotiating with a student who had come to office hours to complain about their grade.

This happened over and over again. I asked for source code for an Atari game about scooping cat litter, and the AI sent me valid programming instructions—it understood the assignment—but only disconnected snippets of actual code with the heading comment “This program creates a simple game where the player must use a scoop to pick up their cat’s litters and put them in a trash bin.” It was an icon of the answer I sought rather than the answer itself.

Then I asked for a set of diagnostic criteria for an invented psychological condition I named “Inherited Truculence.” The AI instantly obliged:

Diagnostic Criteria for Inherited Truculence:

A tendency to experience and express aggressive or hostile thoughts and feelings, particularly in response to perceived threats or challenges.

A history of aggressive or hostile behavior, particularly in interpersonal or social situations.

A familial history of aggressive or hostile behavior, indicating a possible genetic or hereditary component to the condition.

Impairment in social, occupational, or other important areas of functioning as a result of aggressive or hostile behavior.

The symptoms are not better explained by another mental disorder or by the effects of a substance (such as drugs or medication).

Except, truculence doesn’t really amount to “aggressive and hostile thoughts and feelings,” does it? I asked, and the AI agreed, eventually revising its diagnostics accordingly at my further prompting (“A tendency to experience and express defiant or confrontational thoughts and feelings,” and so forth).

Likewise, my prompt to offer both an ontological and an epistemological answer to the question When does bread become toast? yielded a generally fluent, seemingly philosophical reply. It read in part: “when we are able to recognize it as toast based on its appearance, texture, and other sensory characteristics.”

But the philosophy of toast is an area of expertise for me, so I was able to discern that this snippet, supposedly an epistemological argument, was really about perception. “Isn’t the second a phenomenal, and not an epistemological argument?” I challenged. And once more, the AI cried uncle without hesitation: “You are correct, the second argument that I provided is a phenomenal, not an epistemological, argument. An epistemological argument focuses on how we come to know or understand something, whereas a phenomenal argument focuses on our experience or perception of something.”

At this point, talking to ChatGPT began to feel like every other interaction one has on the internet, where some guy (always a guy) tries to convert the skim of a Wikipedia article into a case of definitive expertise. Except ChatGPT was always willing to admit that it was wrong. Instantly and without dispute. And in each case, the bot also knew, with reasonable accuracy, why it was wrong. That sounds good but is actually pretty terrible: If one already needs to possess the expertise to identify the problems with LLM-generated text, but the purpose of LLM-generated text is to obviate the need for such knowledge, then we’re in a sour pickle indeed. Maybe it’s time for that paragraph on accountability after all.

But that’s not ChatGPT’s aim. It doesn’t make accurate arguments or express creativity, but instead produces textual material in a form corresponding with the requester’s explicit or implicit intent, which might also contain truth under certain circumstances. That is, alas, an accurate account of textual matter of all kinds: online, in books, on Wikipedia, and well beyond.

Proponents of LLM generativity may brush off this concern. Some will do so by glorifying GPT’s obvious and fully realized genius, in embarrassing ways that I can only bear to link to rather than repeat. Others, more measured but no less bewitched, may claim that “it’s still early days” for a technology a mere few years old but that can already generate reasonably good 12th-century lyric poems about Whataburger. But these are the sentiments of the IT-guy personalities who have most mucked up computational and online life, which is just to say life itself. OpenAI assumes that its work is fated to evolve into an artificial general intelligence—a machine that can do anything. Instead, we should adopt a less ambitious but more likely goal for ChatGPT and its successors: They offer an interface into the textual infinity of digitized life, an otherwise impenetrable space that few humans can use effectively in the present.

To explain what I mean by that, let me show you a quite different exchange I had with ChatGPT, one in which I used it to help me find my way through the textual murk rather than to fool me with its prowess as a wordsmith.

“I’m looking for a specific kind of window covering, but I don’t know what it’s called,” I told the bot. “It’s a kind of blind, I think. What kinds are there?” ChatGPT responded with a litany of window dressings, which was fine. I clarified that I had something in mind that was sort of like a roller blind but made of fabric. “Based on the description you have provided, it sounds like you may be thinking of a roman shade,” it replied, offering more detail and a mini sales pitch for this fenestral technology.

My dearest reader, I do in fact know what a Roman shade is. But lacking that knowledge and nevertheless needing to deploy it in order to make sense of the world—this is exactly the kind of act that is very hard to do with computers today. To accomplish something in the world often boils down to mustering a set of stock materials into the expected linguistic form. That’s true for Google or Amazon, where searches for window coverings or anything else now fail most of the time, requiring time-consuming, tightrope-like finagling to get the machinery to point you in even the general direction of an answer. But it’s also true for student essays, thank-you notes, cover letters, marketing reports, and perhaps even medieval lais (insofar as anyone would aim to create one). We are all faking it with words already. We are drowning in an ocean of content, desperate for form’s life raft.

ChatGPT offers that shape, but—and here’s where the bot did get my position accidentally correct, in part—it doesn’t do so by means of knowledge. The AI doesn’t understand or even compose text. It offers a way to probe text, to play with text, to mold and shape an infinity of prose across a huge variety of domains, including literature and science and shitposting, into structures in which further questions can be asked and, on occasion, answered.

GPT and other large language models are aesthetic instruments rather than epistemological ones. Imagine a weird, unholy synthesizer whose buttons sample textual information, style, and semantics. Such a thing is compelling not because it offers answers in the form of text, but because it makes it possible to play text—all the text, almost—like an instrument.

That outcome could be revelatory! But a huge obstacle stands in the way of achieving it: people, who don’t know what the hell to make of LLMs, ChatGPT, and all the other generative AI systems that have appeared. Their creators haven’t helped, perhaps partly because they don’t know what these things are for either. OpenAI offers no framing for ChatGPT, presenting it as an experiment to help “make AI systems more natural to interact with,” a worthwhile but deeply unambitious goal. Absent further structure, it’s no surprise that ChatGPT’s users frame their own creations as either existential threats or perfected accomplishments. Neither outcome is true, but both are also boring. Imagine worrying about the fate of take-home essay exams, a stupid format that everyone hates but nobody has the courage to kill. But likewise, imagine nitpicking with a computer that just composed something reminiscent of a medieval poem about a burger joint because its lines don’t all have the right meter! Sure, you can take advantage of that opportunity to cheat on school exams or fake your way through your job. That’s what a boring person would do. That’s what a computer would expect.

Computers have never been instruments of reason that can solve matters of human concern; they’re just apparatuses that structure human experience through a very particular, extremely powerful method of symbol manipulation. That makes them aesthetic objects as much as functional ones. GPT and its cousins offer an opportunity to take them up on the offer—to use computers not to carry out tasks but to mess around with the world they have created. Or better: to destroy it.

A Thoughtful Approach to Homework

Too often, homework is meaningless busywork that stresses and overwhelms students and their families, crushes creativity, and has little impact on children's future success. In Waldorf education, we take a thoughtful, age-appropriate, and balanced approach: homework is introduced later and focuses on meaningful assignments that foster creativity and deepen students' understanding. Assignments often include an artistic or project-based component as well. Our approach to homework is rooted in sparking students’ imagination and creativity, helping them learn to articulate their understanding and viewpoints, and cultivating a strong love of learning. The aim is to ensure that students lead healthy, balanced lives that include time for rest, recreation, free play, and family time.

Originally published in The Atlantic by Joe Pinsker

America has long had a fickle relationship with homework. A century or so ago, progressive reformers argued that it made kids unduly stressed, which later led in some cases to district-level bans on it for all grades under seventh. This anti-homework sentiment faded, though, amid mid-century fears that the U.S. was falling behind the Soviet Union (which led to more homework), only to resurface in the 1960s and ’70s, when a more open culture came to see homework as stifling play and creativity (which led to less). But this didn’t last either: In the ’80s, government researchers blamed America’s schools for its economic troubles and recommended ramping homework up once more.

The 21st century has so far been a homework-heavy era, with American teenagers now averaging about twice as much time spent on homework each day as their predecessors did in the 1990s. Even little kids are asked to bring school home with them. A 2015 study, for instance, found that kindergarteners, who researchers tend to agree shouldn’t have any take-home work, were spending about 25 minutes a night on it.

But not without pushback. As many children, not to mention their parents and teachers, are drained by their daily workload, some schools and districts are rethinking how homework should work—and some teachers are doing away with it entirely. They’re reviewing the research on homework (which, it should be noted, is contested) and concluding that it’s time to revisit the subject.

Hillsborough, California, an affluent suburb of San Francisco, is one district that has changed its ways. The district, which includes three elementary schools and a middle school, worked with teachers and convened panels of parents in order to come up with a homework policy that would allow students more unscheduled time to spend with their families or to play. In August 2017, it rolled out an updated policy, which emphasized that homework should be “meaningful” and banned due dates that fell on the day after a weekend or a break.

“The first year was a bit bumpy,” says Louann Carlomagno, the district’s superintendent. She says the adjustment was at times hard for the teachers, some of whom had been doing their job in a similar fashion for a quarter of a century. Parents’ expectations were also an issue. Carlomagno says they took some time to “realize that it was okay not to have an hour of homework for a second grader—that was new.”

Most of the way through year two, though, the policy appears to be working more smoothly. “The students do seem to be less stressed based on conversations I’ve had with parents,” Carlomagno says. It also helps that the students performed just as well on the state standardized test last year as they have in the past.

Earlier this year, the district of Somerville, Massachusetts, also rewrote its homework policy, reducing the amount of homework its elementary and middle schoolers may receive. In grades six through eight, for example, homework is capped at an hour a night and can only be assigned two to three nights a week.

Jack Schneider, an education professor at the University of Massachusetts at Lowell whose daughter attends school in Somerville, is generally pleased with the new policy. But, he says, it’s part of a bigger, worrisome pattern. “The origin for this was general parental dissatisfaction, which not surprisingly was coming from a particular demographic,” Schneider says. “Middle-class white parents tend to be more vocal about concerns about homework … They feel entitled enough to voice their opinions.”

Schneider is all for revisiting taken-for-granted practices like homework, but thinks districts need to take care to be inclusive in that process. “I hear approximately zero middle-class white parents talking about how homework done best in grades K through two actually strengthens the connection between home and school for young people and their families,” he says. Because many of these parents already feel connected to their school community, this benefit of homework can seem redundant. “They don’t need it,” Schneider says, “so they’re not advocating for it.”

That doesn’t mean, necessarily, that homework is more vital in low-income districts. In fact, there are different, but just as compelling, reasons it can be burdensome in these communities as well. Allison Wienhold, who teaches high-school Spanish in the small town of Dunkerton, Iowa, has phased out homework assignments over the past three years. Her thinking: Some of her students, she says, have little time for homework because they’re working 30 hours a week or responsible for looking after younger siblings.

As educators reduce or eliminate the homework they assign, it’s worth asking what amount and what kind of homework is best for students. It turns out that there’s some disagreement about this among researchers, who tend to fall in one of two camps.

In the first camp is Harris Cooper, a professor of psychology and neuroscience at Duke University. Cooper conducted a review of the existing research on homework in the mid-2000s, and found that, up to a point, the amount of homework students reported doing correlates with their performance on in-class tests. This correlation, the review found, was stronger for older students than for younger ones.

This conclusion is generally accepted among educators, in part because it’s compatible with “the 10-minute rule,” a rule of thumb popular among teachers suggesting that the proper amount of homework is approximately 10 minutes per night, per grade level—that is, 10 minutes a night for first graders, 20 minutes a night for second graders, and so on, up to two hours a night for high schoolers.

In Cooper’s eyes, homework isn’t overly burdensome for the typical American kid. He points to a 2014 Brookings Institution report that found “little evidence that the homework load has increased for the average student”; onerous amounts of homework, it determined, are indeed out there, but relatively rare. Moreover, the report noted that most parents think their children get the right amount of homework, and that parents who are worried about under-assigning outnumber those who are worried about over-assigning. Cooper says that those latter worries tend to come from a small number of communities with “concerns about being competitive for the most selective colleges and universities.”

According to Alfie Kohn, squarely in camp two, most of the conclusions listed in the previous three paragraphs are questionable. Kohn, the author of The Homework Myth: Why Our Kids Get Too Much of a Bad Thing, considers homework to be a “reliable extinguisher of curiosity,” and has several complaints with the evidence that Cooper and others cite in favor of it. Kohn notes, among other things, that Cooper’s 2006 meta-analysis doesn’t establish causation, and that its central correlation is based on children’s (potentially unreliable) self-reporting of how much time they spend doing homework. (Kohn’s prolific writing on the subject alleges numerous other methodological faults.)

In fact, other correlations make a compelling case that homework doesn’t help. Some countries whose students regularly outperform American kids on standardized tests, such as Japan and Denmark, send their kids home with less schoolwork, while students from some countries with higher homework loads than the U.S., such as Thailand and Greece, fare worse on tests. (Of course, international comparisons can be fraught because so many factors, in education systems and in societies at large, might shape students’ success.)

Kohn also takes issue with the way achievement is commonly assessed. “If all you want is to cram kids’ heads with facts for tomorrow’s tests that they’re going to forget by next week, yeah, if you give them more time and make them do the cramming at night, that could raise the scores,” he says. “But if you’re interested in kids who know how to think or enjoy learning, then homework isn’t merely ineffective, but counterproductive.”

His concern is, in a way, a philosophical one. “The practice of homework assumes that only academic growth matters, to the point that having kids work on that most of the school day isn’t enough,” Kohn says. What about homework’s effect on quality time spent with family? On long-term information retention? On critical-thinking skills? On social development? On success later in life? On happiness? The research is quiet on these questions.

Another problem is that research tends to focus on homework’s quantity rather than its quality, because the former is much easier to measure than the latter. While experts generally agree that the substance of an assignment matters greatly (and that a lot of homework is uninspiring busywork), there isn’t a catchall rule for what’s best—the answer is often specific to a certain curriculum or even an individual student.

Given that homework’s benefits are so narrowly defined (and even then, contested), it’s a bit surprising that assigning so much of it is often a classroom default, and that more isn’t done to make the homework that is assigned more enriching. A number of things are preserving this state of affairs—things that have little to do with whether homework helps students learn.

Jack Schneider, the Massachusetts parent and professor, thinks it’s important to consider the generational inertia of the practice. “The vast majority of parents of public-school students themselves are graduates of the public education system,” he says. “Therefore, their views of what is legitimate have been shaped already by the system that they would ostensibly be critiquing.” In other words, many parents’ own history with homework might lead them to expect the same for their children, and anything less is often taken as an indicator that a school or a teacher isn’t rigorous enough. (This dovetails with—and complicates—the finding that most parents think their children have the right amount of homework.)

Barbara Stengel, an education professor at Vanderbilt University’s Peabody College, brought up two developments in the educational system that might be keeping homework rote and unexciting. The first is the importance placed in the past few decades on standardized testing, which looms over many public-school classroom decisions and frequently discourages teachers from trying out more creative homework assignments. “They could do it, but they’re afraid to do it, because they’re getting pressure every day about test scores,” Stengel says.

Second, she notes that the profession of teaching, with its relatively low wages and lack of autonomy, struggles to attract and support some of the people who might reimagine homework, as well as other aspects of education. “Part of why we get less interesting homework is because some of the people who would really have pushed the limits of that are no longer in teaching,” she says.

“In general, we have no imagination when it comes to homework,” Stengel says. She wishes teachers had the time and resources to remake homework into something that actually engages students. “If we had kids reading—anything, the sports page, anything that they’re able to read—that’s the best single thing. If we had kids going to the zoo, if we had kids going to parks after school, if we had them doing all of those things, their test scores would improve. But they’re not. They’re going home and doing homework that is not expanding what they think about.”

“Exploratory” is one word Mike Simpson used when describing the types of homework he’d like his students to undertake. Simpson is the head of the Stone Independent School, a tiny private high school in Lancaster, Pennsylvania, that opened in 2017. “We were lucky to start a school a year and a half ago,” Simpson says, “so it’s been easy to say we aren’t going to assign worksheets, we aren’t going to assign regurgitative problem sets.” For instance, a half-dozen students recently built a 25-foot trebuchet on campus.

Simpson says he thinks it’s a shame that the things students have to do at home are often the least fulfilling parts of schooling: “When our students can’t make the connection between the work they’re doing at 11 o’clock at night on a Tuesday to the way they want their lives to be, I think we begin to lose the plot.”

When I talked with other teachers who did homework makeovers in their classrooms, I heard few regrets. Brandy Young, a second-grade teacher in Joshua, Texas, stopped assigning take-home packets of worksheets three years ago, and instead started asking her students to do 20 minutes of pleasure reading a night. She says she’s pleased with the results, but she’s noticed something funny. “Some kids,” she says, “really do like homework.” She’s started putting out a bucket of it for students to draw from voluntarily—whether because they want an additional challenge or something to pass the time at home.

Chris Bronke, a high-school English teacher in the Chicago suburb of Downers Grove, told me something similar. This school year, he eliminated homework for his class of freshmen, and now mostly lets students study on their own or in small groups during class time. It’s usually up to them what they work on each day, and Bronke has been impressed by how they’ve managed their time.

In fact, some of them willingly spend time on assignments at home, whether because they’re particularly engaged, because they prefer to do some deeper thinking outside school, or because they need to spend time in class that day preparing for, say, a biology test the following period. “They’re making meaningful decisions about their time that I don’t think education really ever gives students the experience, nor the practice, of doing,” Bronke said.

The typical prescription offered by those overwhelmed with homework is to assign less of it—to subtract. But perhaps a more useful approach, for many classrooms, would be to create homework only when teachers and students believe it’s actually needed to further the learning that takes place in class—to start with nothing, and add as necessary.